How To Detect Bullshit

By Scott Berkun, August 9, 2006

Everyone lies: it’s just a question of how, when and why. From the relationship-saving “yes, you do look thin in those pants” to the improbable “your call is important to us”, manipulating the truth is part of the human condition. Accept it now.

I’m certain that given our irrational nature and difficulty accepting tough truths, we’re collectively better off with some of our deceptions. They buffer us from each other (and from ourselves), avoid unnecessary conflicts, and keep the contradictions of our psychologies tucked away from those who don’t need to care. White lies are the spackle of civilization, tucked into the dirty corners and crevices that our necessary but unrealistically inflexible idealisms create. Small lies prop up and support our powerful truths, holding together the insanely half-honest, half-false chaos that spins the world.

But lies, serious lies, should not be encouraged, as they destroy trust, the binding force in all relationships. One particularly troublesome kind of lie is known as Bullshit (BS). These are unnecessary deceptions, committed in the gray area between polite white lies and complete malicious fabrications. BS is usually defined as inventions made in ignorance of the facts, where the primary goal is to protect oneself. The aim of BS isn’t to harm another person, although that often happens collaterally. For a variety of reasons BS can be hard to detect, which is why I’m offering this primer on its detection. But be warned: to keep you on your toes, there are several bits of BS tucked inside this essay which you will have to find for yourself.

Why people BS: a primer

The first lie in the Western canon comes from the same joyful tome as the first murders, wars and plagues: the Old Testament. Scripture is the source of many things, including lessons in BS with tragicomic value.

To recap from the book of Genesis: God tells Adam and Eve not to eat fruit from the tree of knowledge, as pretty as it is, for they’ll die. He wanders off to do some unexplained godlike things, as gods are prone to do, leaving the very tempting tree, unprotected by pit bulls or electrified fences, out for all to see. Meanwhile Satan slinks by and convinces Eve the fruit is good, so she and Adam have an apple snack (the Bible refers to it only as fruit; the apple is one of Western culture’s many additions). God instantly returns and scolds Adam, who blames Eve, resulting in everyone, snakes, people and all, getting thrown out of Eden forever.

Please note that in this tale nearly everyone lied. God lied[1], or was deceptively ambiguous, about the fruit (they weren’t fatal in any modern sense, since if I told you reading this essay will kill you, you’d expect I meant sometime today. Please read the footnote if you’re angered by critiques of scripture). Satan misrepresents the apple’s power, and Adam approximates a lie in his wimpy finger-pointing at Eve. It’s a litany of deception and a cautionary tale: in a book that makes everyone look bad in just a few pages, is it really a surprise how the rest plays out?

People lie for three reasons; the first is to protect themselves. They may wish to protect something they want or need, a concept they cherish, or to prevent something they fear, like confrontation. There is often a clear psychological need motivating every lie.

A well-known fib, “the dog ate my homework”, fits the BS model. In a desperate, fear-driven attempt not to be caught, children’s imaginations conceive amazing improbabilities. Fires, plagues, revolutions, curses, illnesses and absurd reinventions of the laws of physics and space-time have all been summoned by children around the world on the fateful mornings when they find themselves at school sans homework. The need to BS is emotional: logically speaking, the stress of inventing and maintaining a lie is rarely easier than accepting the consequences of the truth.

Which leads to the second reason people lie: sometimes it works. It’s a gamble, but when it works, wow. Did you lie to your parents about girls, boys, fireworks, drugs, grades, or where you were till 2am on a school night? I sure did and still do. My parents still think I’m a famous painter / doctor / professor in London (shhh), and my best friend still believes his high school girlfriend and I didn’t get it on every time I borrowed his car[2]. Even my ever-faithful dog Butch used to lie, in his way, by liberating trash from a house-worth of garbage cans and then hiding in his bed, hoping his lack of proximity to the Jackson Pollock-worthy distribution of refuse that was formerly my kitchen would be indistinguishable from innocence.

Which gives us the third reason people lie, a truth saints and sinners have known for ages: we want to be seen as better than we see ourselves. Sadly, comically, we also believe we’re alone both in having this temptation and in the shame it brings with it (e.g. “We’re not alone in feeling alone”). The secret truth is that everyone has moments of weakness: times when fear and greed melt our brains and we’re tempted to say the lies we wish were true. And for that reason the deepest honesty is found in people willing to admit to their lies, or their barely resisted temptations, and own the consequences. Not the pretense of the saints, who pretend, incomprehensibly, inhumanly, to never even have those urges at all.

Ok, enough philosophy: let’s get to detection.

BS detection

The first rule of BS is to expect it. Fire detectors are designed to expect a fire at any moment: they’re not optimists. They fixate on the possibility of fires and that’s why they save lives. If you want to detect BS you have to engage in skepticism, and add doubt to everything you hear. Socrates, the father of western wisdom, based his philosophy around the recognition, and expectation, of ignorance. It’s far more dangerous to assume people know what they’re talking about, than it is to assume they don’t and let them prove you wrong. Be like Socrates: assume people are unaware of their own ignorance (including yourself) and politely, warmly, probe to sort out the difference.

The first detection tool is a question: How do you know what you know?

Throw this phrase down when someone force-feeds you an idea, an argument or a reference to a study, or over-confidently suggests a course of action. People so rarely have their claims challenged that asking someone to explain how they know something sheds light on whatever ignorance they’re hiding. It instantly diminishes the force of a BS-driven opinion. It works well in response to the following examples:

- “The project will take 5 weeks.” How do you know this? What might go wrong that you haven’t accounted for? Would you bet $10k on this claim? $100k? What if we double the budget or cut something?

- “Our design is groundbreaking.” Really? What ground is that? And who, besides the designers/investors, has this opinion?

- “Studies show that liars’ pants are flame resistant.” What studies? Who ran them and why? Did you actually read the study or a two-sentence press clipping (poorly) explaining the results? Are there any studies that claim the opposite?

When you ask “how do you know what you know?” they often can’t answer quickly. Even credible thinkers need time to sort through their logic, separating assumptions from facts: an exercise that works in everyone’s favor (the fancy word for this subject is epistemology. I wish there were a word like epistemologize, to describe when you challenge someone’s epistemological basis for something).

Of course it’s fine to hear: “This is purely my opinion based on my experience” or “It’s a guess, as we have no data”, but those are more honest claims than most people, especially if they’re making things up, typically make. Identifying someone’s position as opinion, informed or not, disarms the threat of most kinds of BS.

The second tool is also a question: What is the counterargument? Anyone who has seriously considered something will have seen enough facts to support both their current argument and alternative positions: ask for them. It’s a grade school assignment, intended to show there are many reasonable ways to interpret the same set of facts. However, someone who is bullshitting you won’t have researched or thought through anything: they’re making things up. Asking for the counterargument will force them either to back up their position or to end the discussion until they’ve done due diligence. (If they claim there is no counterargument, end the discussion. They are not only BS’ing you, they think you’re a moron.)

Similarly useful questions include: Who besides you shares this opinion? What are your biggest concerns, and what will you do to address them? What would need to change for you to have a different (opposite) opinion?

Time & Pressure

A good thought holds together. Its solid conceptual mass maintains its shape no matter how much you poke, probe, test and examine. But BS is all surface. Like a magician’s bouquet of flowers, it’s pretty as it flashes past your eyes, but its absence of integrity becomes obvious when you hold it in your hands. Anyone creating BS knows this, and will tend towards urgency. They’ll resist reviews, breaks, consultations or the suggestion of sleeping on decisions before they’re made.

Use time & pressure, the third tool of BS detection, in your favor: never allow big decisions to be mismanaged to the point where they must be made urgently. Ask to withhold judgment for a day, and watch the response. Invite people with expertise you need but don’t have to participate in decisions to add intellectual and domain pressure (Hiring them if necessary. The $500 you pay a lawyer, accountant or consultant to review something effectively becomes a well spent BS insurance fee).

Be a leader in creating an environment unpleasant for BS. If everyone knows that claims must run a gauntlet of friendly but rigorous intellectual curiosity, BS will be discouraged while still in the minds of the tempted.

Confidence in reduction

Especially in business and technology, jargon and obfuscation hide huge quantities of BS. Inflated language is a technique of intimidation. The bet is that if you don’t understand what they’re talking about, you’ll feel stupid, or distracted, and give in to the appearance of their superior knowledge. This is, of course, entirely bullshit. To withstand BS you have to have an inner core of self-reliance, holding on to your doubts longer than the BS’er holds onto their charade.

For example: Our dynamic flow capacity matrix has unprecedented downtime resistance protocols.

If you don’t understand what this means, err on your own side. Don’t assume you’re missing something: assume they are. They’re either hiding something, communicating poorly, or don’t themselves understand what they’re talking about. BS deflating responses include:

- I refuse to accept this proposal until I, or someone I trust, fully understands it.

- Explain this in simpler terms I can understand (repeat if necessary).

- Break this into pieces you can verify, prove, compare, or demonstrate for me.

- Are you trying to say “our network server has a backup power supply?” If so, can you speak plainly next time?

Assignment of trust

The fourth tool of BS detection (derived from the rule of expecting BS) is careful assignment of your trust. Never agree to more than your trust allows. Who cares how confident they are: the question is how confident are you in them? It’s rare that there isn’t time for trust to be earned. Divide requests, projects or commitments into pieces. It’s not offensive to refuse to take someone’s word if they have no history of living up to it (especially if they’re trying to sell you something).

And trust can be delegated. I don’t need to trust you if you’ve earned the trust of people I trust. Anyone skilled in the BS arts has obtained that skill through practice, diminishing the odds that many BS-proof people have been successfully deceived by them in the past. Nothing defuses BS faster than a collective of people who help each other detect and eliminate BS. If a team of people witnesses the complete evisceration of someone’s BS, few will attempt it again: they’ll know your world is a BS-free zone. Great teams and families help each other detect bullshit, both in others and in themselves, as sometimes the real BS we need to fear is our own.

Footnotes

[1] One popular interpretation of Genesis 2:17 is that God meant “you will be mortal” when he said “you will surely die”, so it’s not a lie; this is in line with the many who believe in the omnibenevolence of god or the perfect nature of the Bible. While I question these positions, they are popular views and deserve mention. More to my point, in the context of Genesis, there is no way Adam could have known, when told by God he’d surely die, any of these modern interpretations of God’s words, or the symbolic meaning of all these things that we know now in the present.

[2] This is, of course, complete bullshit. I have never lied to anyone ever. I never had a friend, and if I did, he did not have a girlfriend or any friends other than me.

The link about apples not being in Genesis was added on 3/6/2012. I’d known about this common erroneous assumption, but didn’t see the need to call it out until now.

The phrase, “or was deceptively ambiguous”, was added 9/25/2006.

The phrase “..they weren’t fatal in any modern sense… ” was added 6/30/2010

References / Notes

- This essay was written long before I became aware of Cognitive Bias, which provides a great foundation for understanding the role our brains play in creating, recognizing or falling victim to BS.

- On Bullshit by Harry G. Frankfurt is a popular book on the subject. It’s a short read, barely 70 pages, but is sadly toned more like a philosophy textbook than the entertaining romp it could have been.

- Carl Sagan’s Baloney Detection Kit

- Why We Lie – Short essay summarizing some basic research into the psychology of lies (LiveScience).

- Web economy and Dilbert’s mission statement generators – If the output of these seems all too familiar, run.

- Why Smart People Defend Bad Ideas – The older, twisted sister to this essay.

- Bullshit entry at wikipedia.

- Web economy bullshit generator – speaks for itself.

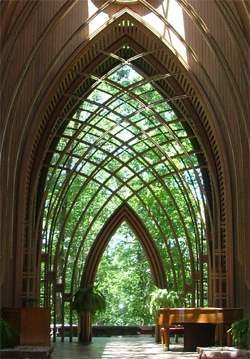

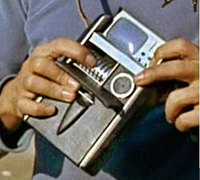

Photo credits: Bullfighting arena, Razorback’s Ozarks, Tricorder, Bulls for sale.

Scott Berkun said, “People lie for three reasons”.

I figure people lie for other reasons, too.

4. They want to help someone temporally or emotionally.

5. They are too lazy to come up with something under Reasons 1 through 4.

I love this essay and all of your writings. And anyone who doesn’t agree with it is probably so consumed by or used to bullshit that they don’t even know the difference.

“And anyone who doesn’t agree with it is probably so consumed by or used to bullshit that they don’t even know the difference.” No need to speak for everyone else. Just yourself.

Why so mean?

I do not agree.

The author used a hypothetical example:

‘Our dynamic flow capacity matrix has unprecedented downtime resistance protocols.’

As a technical person I have been confronted with this sort of thing… although I would never be so mealy-mouthed.

Flow capacity matrix suggests a combination of vector calculus and linear algebra. Resistance protocols suggests optimization and some number theory derived mathematics.

First of all, let me say I am quite reclusive. I do not care for people very much. In fact, I can be downright obnoxious, if only to belittle idiots.

My standardized response to those that question me with dumbed down lay responses is:

It’s hardly my problem that you have never bothered to educate yourself above the bare modicum of primary school intellect. Now, why is it that I am required to explain myself when it is you who does not understand introductory science?

On that basis, I expected something more tangible from this article than some explanation of a biblical fairy story… an explanation that appears in plenty of other places.

And yes, I do not get invited to any meetings with clients or other lay people.

This paper was one of my early finds in the academic(s’) literature on the subject, and as I have gotten much deeper into bullshit I recently received my hard copy of ‘The Bullshit Factor – the truth about corporate disguises, lies and denials’ by Bellini, James and Kati St Clair. Having read the entire book, I am even more impressed than I was after having read only parts of the electronic version on the internet (amazon.co.uk); I highly recommend this book. While the title implies that it applies to corporations, it is my opinion that the information applies to all organizations, including dysfunctional governments. Bullshit ueber alles!

By Golly! I figured it out!

The Fruit Of Knowledge is a BS Detector that allowed Adam to realize the whole truth of his existence and that he was a slave of God brainwashed to think he was a leader!

The snake or Satan likely had it with the corruption going on and refused to own any slaves so was kicked out of heaven 4 it.

There is another factor. Robert Heinlein (and maybe others) called it the “willing suspension of disbelief”, and used it in reference to science fiction: how you can read it and not be continually going “that isn’t true”. In life, though, the willing suspension of disbelief is fairly common. You want to believe someone, so you do, even if it’s improbable. Think new girlfriend/boyfriend. The intent is to make the other person think you trust them, and are therefore trustworthy yourself. That’s how we bullshit without even saying anything.

Good stuff as always, Scott. I ain’t bullshittin’.

P.S. I follow you through your RSS feed.

Any time I hear about a talking snake I automatically detect BS. Just sayin.

OK, yet again a good article. However, I disagree with your interpretation of Genesis. God did not lie. What he meant by “you shall surely die” (as far as I’ve been taught) is that they spiritually died. They disobeyed God and were therefore separated by sin from God, and this is what is meant by spiritual death. Plus, Adam died physically later anyway, so draw your own conclusions. I don’t mean to be preachy, and I could be wrong, but I have to put my two cents in.

Keep ’em coming

You’re spot on, Trevor.

Don’t believe in all the INTERPRETATIONS, even our own; just RIGHTLY DIVIDE GOD’S WORD.

Michael, there is a logical problem with Trevor’s statement and your concurrence with it. The problem is that you are omitting key facts in this case. The tree from which the fruit was taken was the tree of knowledge of good and evil. Therefore, despite Adam having been forbidden in this instance to partake of the fruit, no evil intent can be ascribed to Adam, because he could not have understood the difference between good and evil prior to partaking of the fruit, which would thereafter disclose that knowledge to him. You are in effect naming an act which could have no evil intent (Adam and Eve did not yet know what good and evil were) as a “sin”. A more fitting term would be transgression, so as not to presuppose any voluntary act of evil. You are in effect saying there are sins that are not evil. If you have followed this far, then you will also have to agree to the point of logic that follows next: that there is no original sin. It is a meaningless term at best, because in the context of Adam and Eve, sin could be neither good nor evil until after they partook of the fruit of the tree. Yet the entire basis of orthodox Christianity rests upon the necessity of there being an original sin, which there was not. A right division of this account renders every point that follows after it meaningless. Original sin is a false doctrine, and because it comes before all other doctrines of Christianity, it voids the remaining ones completely.

A book that has just been published by Joe Bennett NZ titled ‘Double Happiness: How bullshit works’ appears to go along with this paper. The description of the book is excellent – if a person is interested in the academic(s’) literature on bullshit this book appears to be must read.

Please add: It is probably BS when an expert has not made the rule change but claims this new rule will be “no problem”.

Dang, I went to a university counselor who gave me terrible advice.

You know, one of those hard-to-get-into college programs, and he told me I was a shoo-in. I wasn’t.

His bosses had changed the rules to allow people with lower scores to get in to achieve diversity. He knew about the rule change but did not know it was about letting less qualified people in, he had believed the BS of his bosses who claimed that “essays and interviews would make sure people could handle the program”.

My instinct was that it was strange that he was telling me what I wanted to hear, but he was the expert so I believed him.

So there is a component of BS you missed: when the ground shifts under the “experts”. Experts have very little chance of predicting the future when they have been BS’ed about changes to their area of expertise.

I should have grabbed onto the rule change and investigated it. His bosses would have lied to me, too, though.

Anyway, for everybody here to know: An essay and interview is a way to keep out qualified people for diversity. Apply to lots of schools even if they will cost a lot more.

I’ll tell you what BS is: it’s your city waste department refusing to collect your garbage can because it is the wrong shape.


You keep telling me I am entered to win millions of dollars, but you keep telling me I have to keep entering every day. If that isn’t bullshit, I’m a monkey’s uncle, which I may be.

Hi scott like this wrighting. I am a inspired wrighter and i am pretty sure i will make my own BS detection like ur article says then ill be like ‘beep-beep- i detect some BS!!!’ to my sister LOL.

then she’ll be like beep-beep-beep-beep-beep-beep-beepand we have like it sounds like a car parking lot or like when drive on new york city people honk honk at you.

Im pretty sure its new york but it might be albany where they do that but anyways ill be like beep-beep!! LOL, thanks for the idea im probably gonna wright a book about this article and ill probably sell it for like 4.99. like i dont want to sell it for too much because then poeple say lohh thats too high price but then if i sell for like 4.99 then there like oh okay and i see like 50 copies. but if i sell for like 10 dollars i only sell like 10 so its obvious make me more rich if i sell for like 4.99. i actually like ending in a 7 so it woud be like 4.97 cause my uncle taught me for every 3 sents that you shave, one more buys so Ill probably sell like 51 then it mkes it smart for me to sell it for 4.97. i dont know what happens if y sell it for like 4.98 though becaue i dont like 8 and people probably be mad because f you think about it the number 8 is like the devils number cause is like 666 = 1/3 of 222 and 2222 is 8. so that scares me but besides that its ok ill probably sell if for just 4.97 so that people dont think im the devil LOL!!!

Hi Scott,

I have been leaving you messages on your posts in character! I just wondered if you like my fictional character? I am trying to develop a sense of person so I can deeper explore the depths of his nature. He has an IQ of just 74, but he is an incredibly deep character with passion and a belief in the world. I am just wondering if you like my character or if you think it still needs work before I begin writing the official book? Your opinion is greatly valued to me. Peter is going to be a bit like Lennie in Of Mice and Men.

Please let me know if you like the character or think I should improve on him.

Thanks,

Allen Wrench

Allen I think you should read Joe Bennett, 2012, Double Happiness: How Bullshit Works before you start writing your book – a visit to bullshitcitynorth might also help get you started – cheers!

Excellent essay, Scott. I know it’s 7 years since you’d written it, but still relevant and hilarious as the day you wrote it!! Thank you for puttin’ it on the ‘Net!

Best indicator that a bullshitter is talking bullshit:

When a speaker makes promises that you know and s/he knows that s/he can’t keep and you know that s/he knows that you know s/he can’t keep and s/he knows that you know; but s/he tries to bullshit anyway.

Someone once told me that the gods in the heavens, the gods on the mountains and the gods in the ocean did not bother him; however, he could not say the same thing about the god-bureaucracy on earth. His point was that we need to separate the activities of the god-bureaucracy from our own relationship with the gods. To me that makes a lot of sense.

Vacillation – a bullshit indicator:

Here is a current example – how can one write that something that makes 4 million citizens sick, is very safe? Wilful blindness?

http://www.phac-aspc.gc.ca/efwd-emoha/efbi-emoa-eng.php

“Estimates of Food-borne Illness in Canada

The Public Health Agency of Canada estimates that each year roughly one in eight Canadians (or four million people) get sick due to domestically acquired food-borne diseases. This estimate provides the most accurate picture yet of which food-borne bacteria, viruses, and parasites (“pathogens”) are causing the most illnesses in Canada, as well as estimating the number of food-borne illnesses without a known cause.

In general, Canada has a very safe food supply; however, this estimate shows that there is still work to be done to prevent and control food-borne illness in Canada, to focus efforts on pathogens which cause the greatest burden and to better understand food-borne illness without a known cause.”

No doubt there are many human beliefs, but there is only one reality. One truth. I hold that truth to be everything written in the Bible. Why? Take a look at Israel. In the Bible, Israel is God’s chosen people. Prior to 1948, non-believers would say, “Here is what proves your Bible false. Israel has no land, no army, no government, and no LANGUAGE.” (Hebrew, the Jewish language, actually had to be reconstructed.) Jerusalem was captured by the Romans around 90 A.D. and the Jews went into exile for more than 1800 years, until they became a nation in 1948, fulfilling scriptures (prophecies). They were in exile longer than Rome was an empire, both east and west combined. Here are the scriptures concerning this: Ezekiel 37:21-22, Amos 9:14-15, Ezekiel 37:10-14, Isaiah 66:7-8, Jeremiah 16:14-15, Ezekiel 34:13, Jeremiah 31:10.

P.S. It’s no secret that the Bible was written by man, but that does not mean it is man’s word. From Genesis to Revelation, you can tell it is written by one author. I also used to make the defense “the Bible is written by man!” but then I actually read it. I hope whoever reads this comment researches evidence for and against the God of the Hebrew Bible from trustworthy sources. I also ask that you enter that quest with no verdict already in your mind, as though this were the first time you heard about this.

There are plenty of people who disagree with you, and each other, on this, which means my website is one of the worst places on the web to debate matters of biblical scholarship. Plenty of excellent references and people to argue with here: http://en.wikipedia.org/wiki/Authorship_of_the_Bible

Hi Scott berkun

He terrorist, get the hell outta here

Why, this article is the biggest bunch of bullshit I’ve ever read!

Just kidding. I enjoyed it and found it spot-on!


Loved this, Scott! Especially your starting with the oft-ignored “How Do You Know You Know Something is True?” Most of the population would run if you used the word “epistemology.” Thanks for presenting the concept in everyday terms, sort of like Pogo’s Owl did when he remarked, “You may as well quit your thinkin. It ain’t improving your talkin.”

Too many still ignore this question and the possibility of its having differing answers, even with the world horrified at some results of conflicting bases of “knowing what is true.”

More power to you!

is this site an afterthought or are the various capitalizations of god purposeful?

why do people bullshit. Google works then, a complete and load of utter bullshit.

Bulshitt????

Absolutely.

Was this essay supposed to be serious? I think it was loaded with BS right at the beginning.

I detect bullshit on this website. Origin of said bullshit: Scott Berkun.

Hi, Scott. Last year, I enjoyed reading your blog. My book is a word survival guide for everyday use, for readers less literate than yours. It’s a translation of essential ways to evaluate incoming and self-imposed BS. I worked in NYC pr, then did grad work at NYU with Hayakawa (my idol) then taught at S.M.U.

Would be delighted to have you look at the resulting book: B.S. Detecting, the Flip Side of Success-Possible Communicating. Want a free Mobi?

What does Google lie about?

Very nice.

One has to avoid becoming the sort who thinks everything out there is bullshit, a scam, or whatever else in that field, though. Sort of like the cynical old man or the arrogant young person who thinks everything besides their own perception of what is legitimate is garbage.

In the end, I don’t waste time trying to detect people’s garbage. Considering what I do, it’s not worth it, and it makes no difference to me anyways. I’ve known all sorts of people who spend a huge amount of time trying to find out if something is true or not. Usually, it seems to be on things that are of no significance to themselves.

Just breathe and live your life.

I DONT WORSHIP GOD SATAN BIBLE NECROMECAN OR ADAM OR EVE OR SNAKES APPLES BECAUSE I DONT BELIVE IN LIES WHISPERING ECHOES WHITE NOISE JUST AS ITS ALL A PILE OF BULLSHIT AS U SAY YOUR RIGHT THANKYOU UVE MADE MY DAY TELL ME MORE IM INTRUIGED 4 EVER N EVER YOUR THE SECOND TRUTHTELLER IVE FOUND 2DAY AND I NEED TO FIND 5 MORE WONT EVEN BOTHER WITH 666 CAUSE IT BULLSHIT AS IS 999 9111 69 AND 96